The repository for this page provides the code in runnable form. The only dependencies are the respective frameworks (DyNet 2.0.3, PyTorch 0.4.1 and Tensorflow 1.9.0).

Website usage: Use the buttons to show one or more implementations and their associated comments (note, depending on your screen size you may need to scroll to see all the code). Matching or closely related content is aligned. Framework-specific comments are highlighted in a colour that matches their button and a line is used to make the link from the comment to the code clear.

New (2019) Runnable Version: I have made a slightly modified version of the Tensorflow code available as a Google Colaboratory Notebook.

Making this helped me understand all three frameworks better. Hopefully you will find it informative too!

```python
import torch

# KEEP_PROB, DIM_EMBEDDING and LSTM_HIDDEN are constants defined elsewhere in the program.

class TaggerModel(torch.nn.Module):  # class name assumed; not shown in this excerpt
    def __init__(self, nwords, ntags, pretrained_list, id_to_token):
        super().__init__()

        # Create word embeddings
        # (the tensor constructor is truncated in this excerpt; FloatTensor is assumed)
        pretrained_tensor = torch.FloatTensor(pretrained_list)
        self.word_embedding = torch.nn.Embedding.from_pretrained(
            pretrained_tensor, freeze=False)
        # Create input dropout parameter
        self.word_dropout = torch.nn.Dropout(1 - KEEP_PROB)
        # Create LSTM parameters
        # (the attribute names lstm, lstm_output_dropout and hidden_to_tag are assumed)
        self.lstm = torch.nn.LSTM(DIM_EMBEDDING, LSTM_HIDDEN, num_layers=1,
                                  batch_first=True, bidirectional=True)
        # Create output dropout parameter
        self.lstm_output_dropout = torch.nn.Dropout(1 - KEEP_PROB)
        # Create final matrix multiply parameters
        self.hidden_to_tag = torch.nn.Linear(LSTM_HIDDEN * 2, ntags)

    def forward(self, sentences, labels, lengths, cur_batch_size):
        max_length = sentences.size(1)

        # Look up word vectors
        word_vectors = self.word_embedding(sentences)
        # Apply dropout
        dropped_word_vectors = self.word_dropout(word_vectors)
        # Run the LSTM over the input, reshaping data for efficiency
```

PyTorch 0.4 does not support recurrent dropout directly.

This code was last updated in August 2018. If one of the frameworks has changed in a way that should be reflected here, please let me know!
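The `forward` method shown here stops at the comment about running the LSTM over the input and reshaping data for efficiency. One common way to do that step in PyTorch is to pack the padded batch before the LSTM and unpack it afterwards. The sketch below illustrates that approach under stated assumptions and is not necessarily the code used on this page: the sizes are hypothetical, the batch is random data rather than embedded sentences, and the module names `lstm` and `hidden_to_tag` are chosen for illustration.

```python
import torch
from torch.nn.utils.rnn import pack_padded_sequence, pad_packed_sequence

# Hypothetical sizes for illustration only (not the page's hyperparameters).
DIM_EMBEDDING = 8
LSTM_HIDDEN = 5
NTAGS = 4

torch.manual_seed(0)
lstm = torch.nn.LSTM(DIM_EMBEDDING, LSTM_HIDDEN, num_layers=1,
                     batch_first=True, bidirectional=True)
hidden_to_tag = torch.nn.Linear(LSTM_HIDDEN * 2, NTAGS)

# A padded batch of three "sentences", sorted longest first as packing expects.
lengths = [6, 4, 2]
max_length = lengths[0]
word_vectors = torch.randn(len(lengths), max_length, DIM_EMBEDDING)

# Pack the batch so the LSTM does no work on padding positions.
packed = pack_padded_sequence(word_vectors, lengths, batch_first=True)
packed_output, _ = lstm(packed)

# Unpack to a padded tensor of shape (batch, max_length, 2 * LSTM_HIDDEN).
lstm_out, out_lengths = pad_packed_sequence(
    packed_output, batch_first=True, total_length=max_length)

# Score every tag at every position, then pick the best tag per token.
tag_scores = hidden_to_tag(lstm_out)
predicted_tags = tag_scores.argmax(dim=2)
```

Positions past the end of a sentence come back from `pad_packed_sequence` as zeros, so any loss or accuracy computed on `tag_scores` still needs to mask them out using `lengths`.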
All three implementations score ~97.2% on the development set of the Penn Treebank. The specific hyperparameter choices follow Yang, Liang, and Zhang (COLING 2018) and match their performance for the setting without a CRF layer or character-based word embeddings.

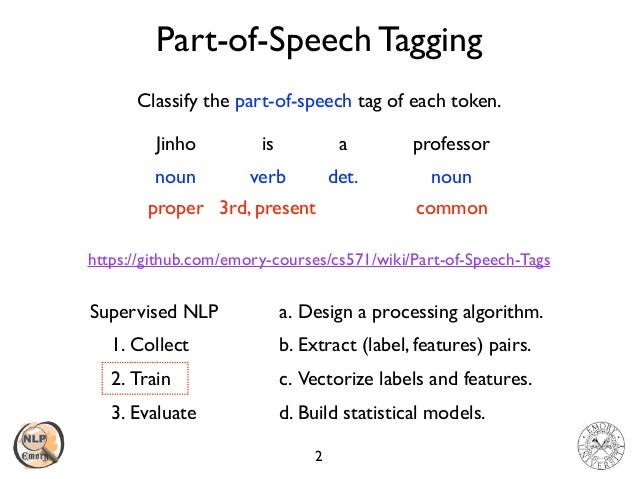

DyNet, PyTorch and Tensorflow are complex frameworks with different ways of approaching neural network implementation and variations in default behaviour. This page is intended to show how to implement the same non-trivial model in all three. The design of the page is motivated by my own preference for a complete program with annotations, rather than the more common tutorial style of introducing code piecemeal in between discussion. The design of the code is also geared towards providing a complete picture of how things fit together. For a non-tutorial version of this code it would be better to use abstraction to improve flexibility, but that would have complicated the flow here.

The three implementations all define a part-of-speech tagger with word embeddings initialised using GloVe, fed into a one-layer bidirectional LSTM, followed by a matrix multiplication to produce scores for tags.
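To make "initialised using GloVe" concrete, here is a minimal sketch of how a `pretrained_list` aligned with `id_to_token` might be built from GloVe's plain-text format (one token per line followed by its vector). The helper names `load_glove` and `build_pretrained_list`, the tiny in-memory file, the lowercasing of lookups and the uniform range used for missing words are all assumptions made for illustration, not details taken from this page.

```python
import io
import math
import random

DIM_EMBEDDING = 4  # hypothetical; real GloVe vectors are much larger


def load_glove(handle):
    """Read GloVe's text format: a token followed by its vector on each line."""
    pretrained = {}
    for line in handle:
        parts = line.strip().split()
        if not parts:
            continue
        pretrained[parts[0]] = [float(v) for v in parts[1:]]
    return pretrained


def build_pretrained_list(id_to_token, pretrained, dim, rng):
    """Return one vector per vocabulary entry, in id order."""
    scale = math.sqrt(3.0 / dim)  # assumed range for words without a vector
    vectors = []
    for token in id_to_token:
        key = token.lower()  # assumes a lowercased GloVe vocabulary
        if key in pretrained:
            vectors.append(list(pretrained[key]))
        else:
            vectors.append([rng.uniform(-scale, scale) for _ in range(dim)])
    return vectors


# Tiny in-memory stand-in for a GloVe file.
glove_file = io.StringIO(
    "the 0.1 0.2 0.3 0.4\n"
    "cat 0.5 0.6 0.7 0.8\n"
)
id_to_token = ["<unk>", "The", "cat", "sat"]

pretrained = load_glove(glove_file)
pretrained_list = build_pretrained_list(
    id_to_token, pretrained, DIM_EMBEDDING, random.Random(0))
```

The resulting list has one row per vocabulary id, so it can be converted to a tensor and used to initialise an embedding table whose rows line up with the word ids in each sentence.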
